Roles and permissions

To successfully deploy and use the Microsoft Sentinel integration, different Azure roles and permissions are required for various personas involved in the process. The following table outlines the key roles, their responsibilities, and the required permissions for each role:

| Role | Responsibilities | Required permissions |
|---|---|---|
| Installer | | |
| Admin | | Optional: |
| User | | |

Prerequisites

Before you begin the installation and configuration process, ensure the following prerequisites are met:

- Active Azure subscription with permissions to deploy resources

- Microsoft Sentinel workspace (Log Analytics Workspace) in a target resource group

- BloodHound Enterprise tenant

- BloodHound Enterprise non-personal API key/ID pair

- Microsoft Entra ID application with the Monitoring Metrics Publisher role on the target resource group

Configure the integration

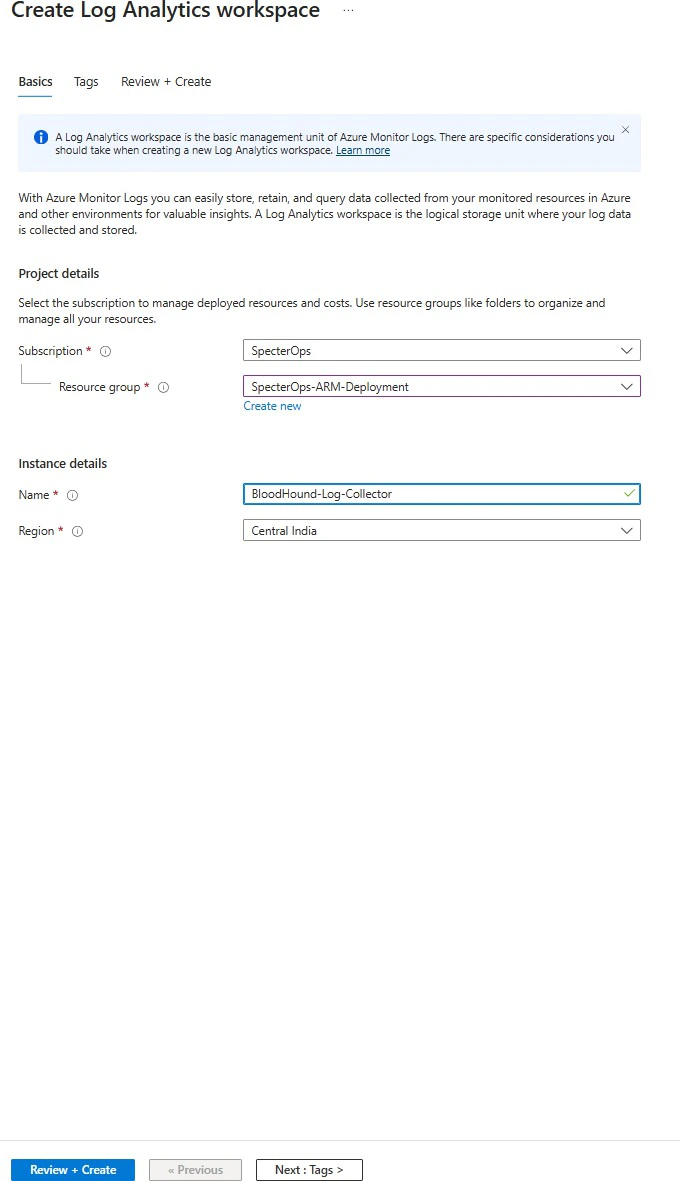

Follow the steps below to deploy and configure the Microsoft Sentinel integration for BloodHound Enterprise. This process involves deploying Azure resources, configuring authentication, and setting up data ingestion.

Create a Log Analytics Workspace

Create a Log Analytics Workspace to store the data ingested from BloodHound Enterprise. This workspace will be connected to Microsoft Sentinel for monitoring and analysis.

- Log in to the Azure Portal with an account that has the necessary permissions for Microsoft Sentinel and Log Analytics Workspace configurations.

- Navigate to Log Analytics workspaces and click Create.

- Select subscription and resource group, then enter a workspace name.

- Click Review + create.

- Add the Log Analytics Workspace in Sentinel:

  - Navigate to Sentinel.

  - Click Create.

  - Select the newly created Log Analytics Workspace.

  - Click Add.

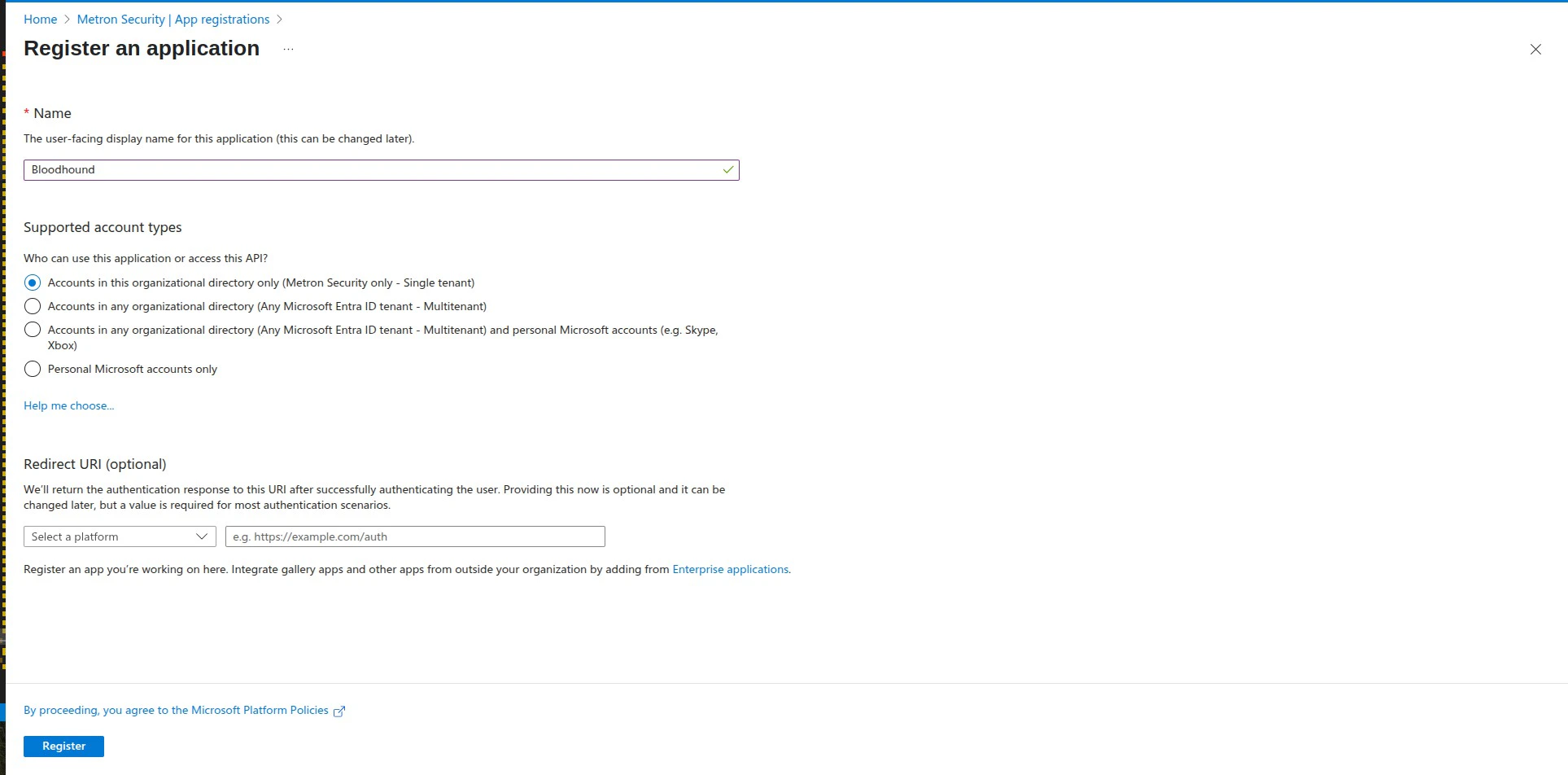

Register a Microsoft Entra ID application

Register a Microsoft Entra ID application to authenticate the data connector with Microsoft Sentinel. This application will be granted the necessary permissions to publish data to Sentinel.

- Open Microsoft Entra ID.

- Go to App registrations > New registration.

- Enter an app name and choose Accounts in this organizational directory only. No redirect URI is necessary.

- Click Register.

- Copy the Application (client) ID and Directory (tenant) ID. You’ll need these later.

- Under Certificates & secrets, create a client secret and save its value immediately. It will not be shown again.
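The three values collected above (tenant ID, client ID, client secret) are what an OAuth 2.0 client-credentials flow against Microsoft Entra ID requires. The following is a minimal sketch of how such a token request could be built; it is not taken from the connector's code. The function name `build_token_request` is hypothetical, and the `https://monitor.azure.com/.default` scope is an assumption based on the application publishing data through Azure Monitor.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_token_request(tenant_id: str, client_id: str, client_secret: str) -> Request:
    """Build (but do not send) a client-credentials token request.

    Hypothetical helper for illustration only.
    """
    # Microsoft identity platform v2.0 token endpoint for a single tenant.
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    form = urlencode(
        {
            "grant_type": "client_credentials",
            "client_id": client_id,
            "client_secret": client_secret,
            # Assumption: the app needs a token for Azure Monitor ingestion.
            "scope": "https://monitor.azure.com/.default",
        }
    ).encode("ascii")
    return Request(
        url,
        data=form,
        method="POST",
        headers={"Content-Type": "application/x-www-form-urlencoded"},
    )


# Placeholder values; substitute the IDs and secret saved during registration.
request = build_token_request("<tenant-id>", "<client-id>", "<client-secret>")
print(request.get_method(), request.full_url)
```

Passing the returned object to `urllib.request.urlopen` would send the request; a successful response is JSON that includes an `access_token` field. A failure at this stage usually points to a wrong ID or an expired secret rather than to a problem in Sentinel.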

Assign required Azure role to the app

Assign the Monitoring Metrics Publisher role to the Microsoft Entra ID application on your resource group:

- Open your resource group.

- Go to Access control (IAM) > Add role assignment.

- Assign Monitoring Metrics Publisher to the Entra application.

- Select a user, group, or service principal to assign access to, then click Select members.

- Select the application that you created, then click Select.

- Click Review + Assign.

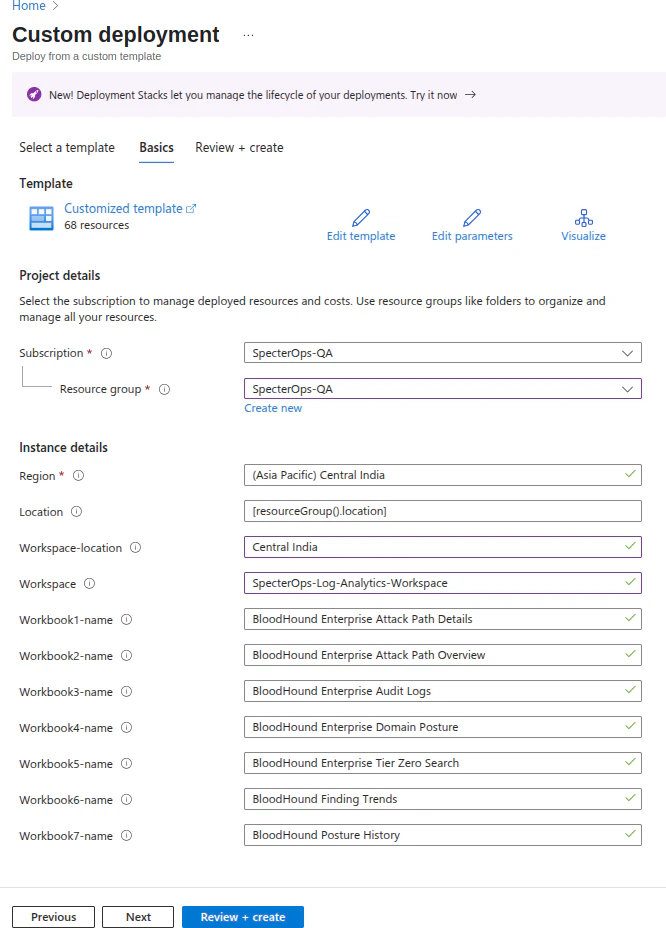

Deploy the workbook and analytics rules template

Before starting the deployment, go to the Log Analytics Workspace you created and note the name and location of the workspace. You will need this during deployment.

- Click the following link to open a preloaded ARM template in the Azure Portal: Deploy to Azure.

- Confirm the template opens on the Custom deployment page.

Configure deployment parameters

Fill in the deployment parameters for the workbook and analytics rules template using the information from previous steps and your environment.

- Select the target subscription and resource group, then enter deployment parameters such as workspace name and workspace location.

- Click Review + create.

- Click Create to deploy the workbook and data connector resources.

Verify the deployment

Verify the workbook and analytics rules deployment before deploying the data connector template:

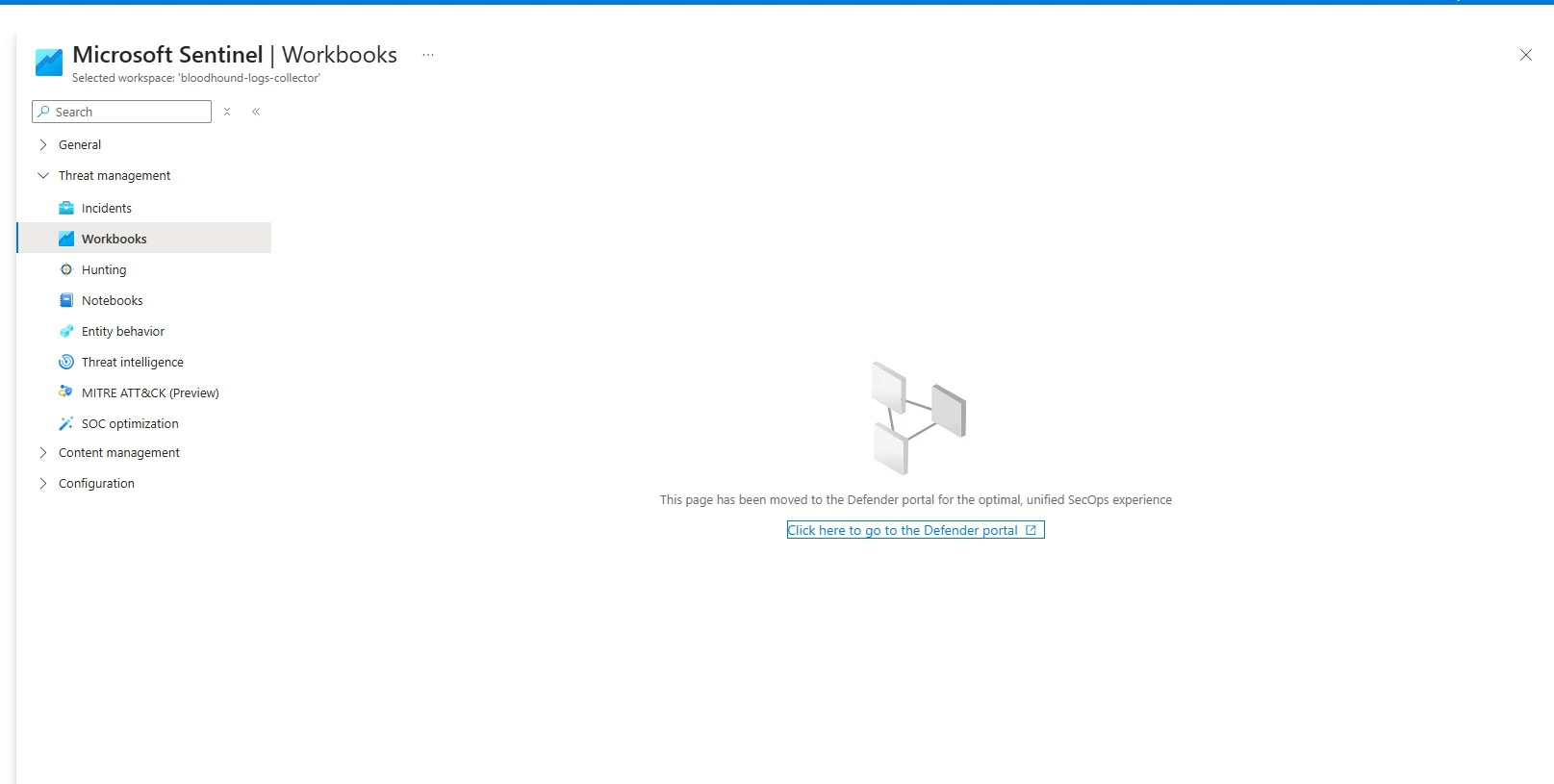

- In the Azure Portal, open Microsoft Sentinel and select the workspace where you deployed the template.

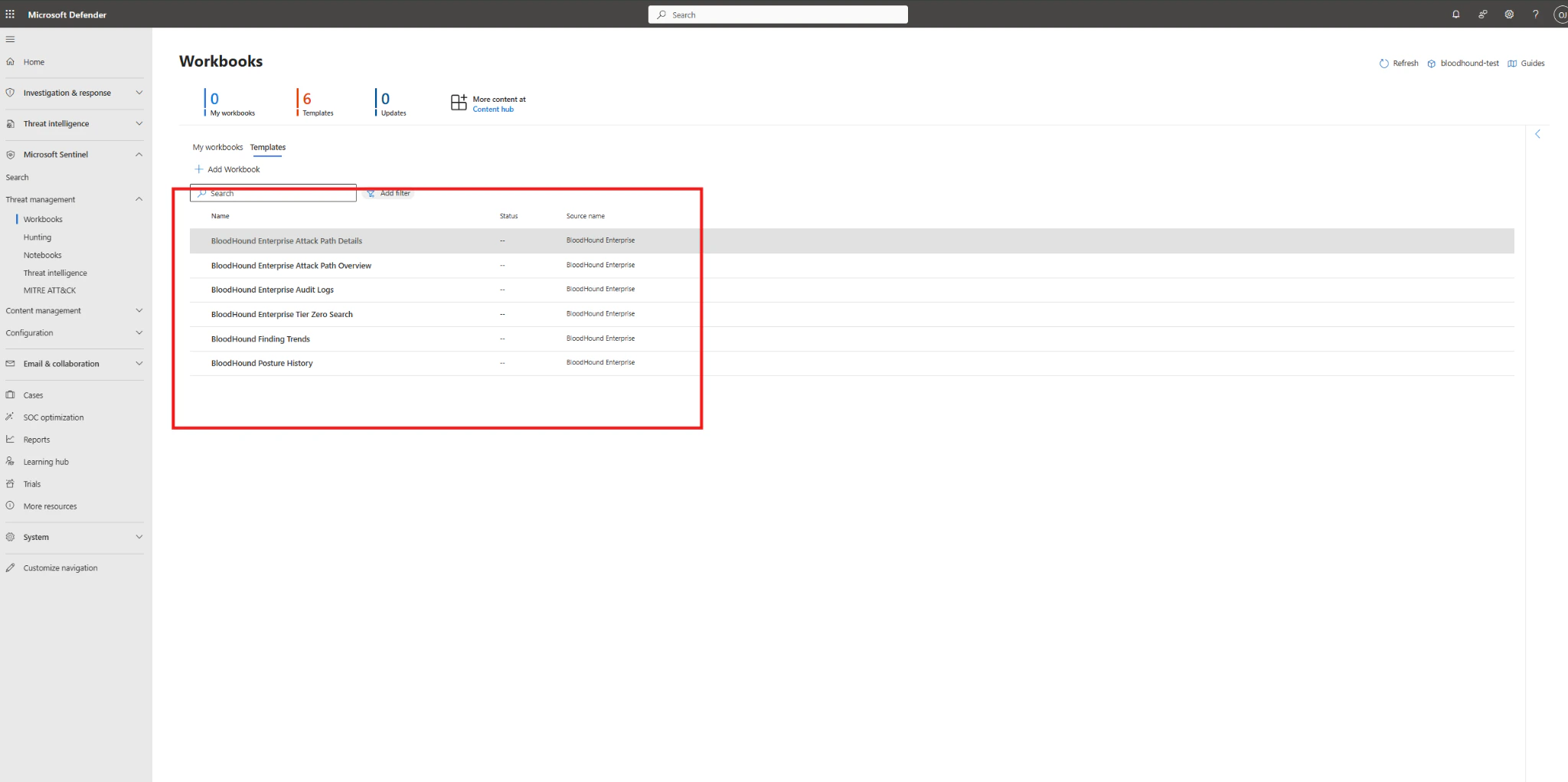

- Go to Workbooks under Threat management.

-

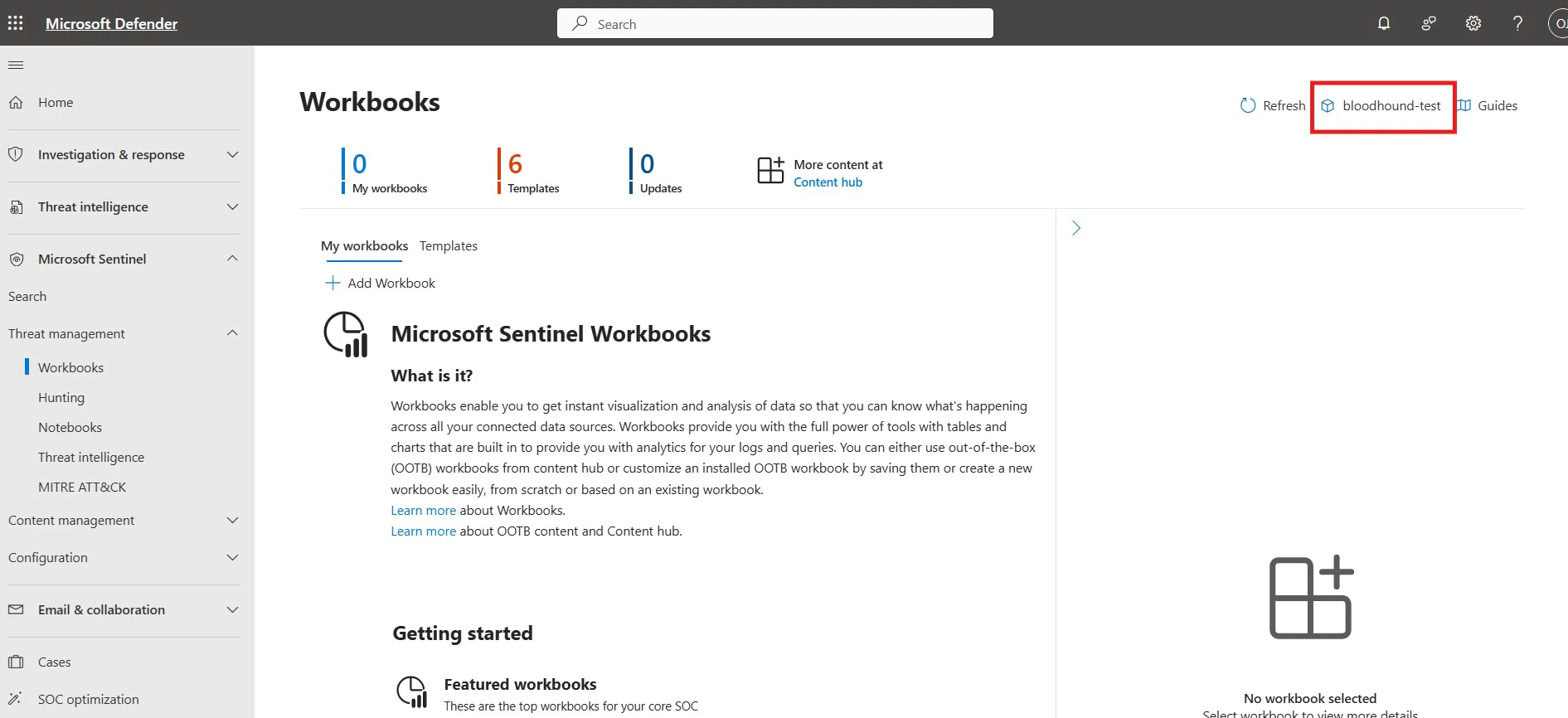

If prompted to continue in Microsoft Defender, select the link to open Microsoft Defender portal.

-

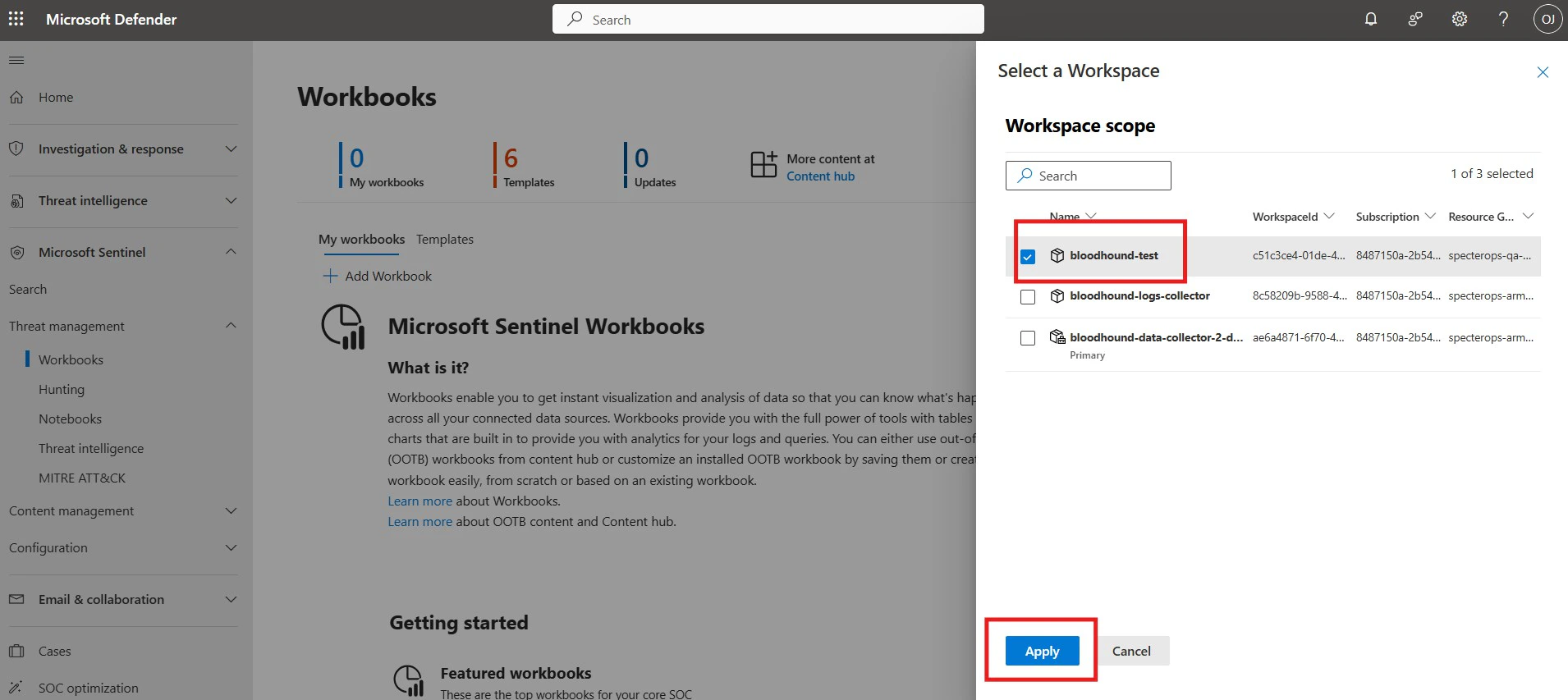

If multiple Sentinel workspaces are available, select the integration workspace from the workspace selector in the top-right corner.

-

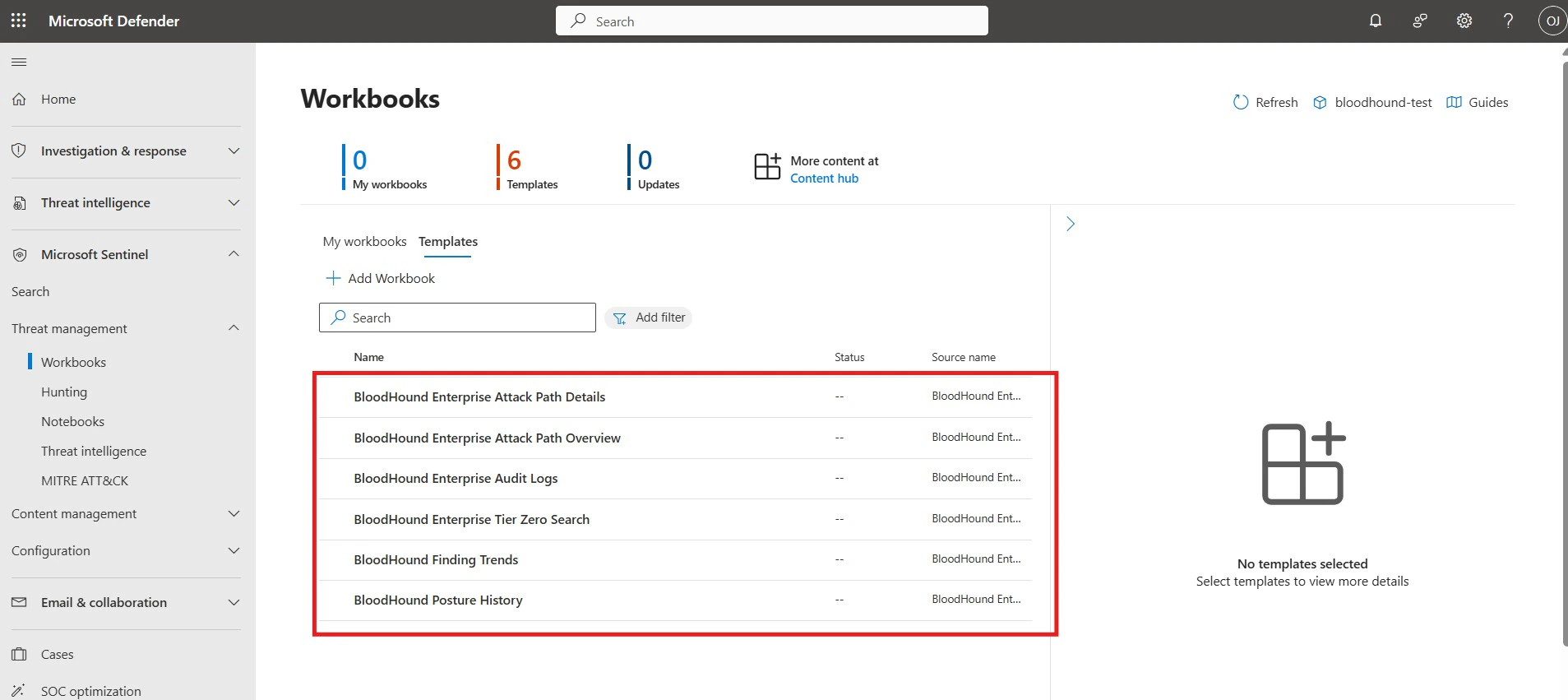

In Workbooks, open the Templates tab and verify the BloodHound workbook templates are available.

-

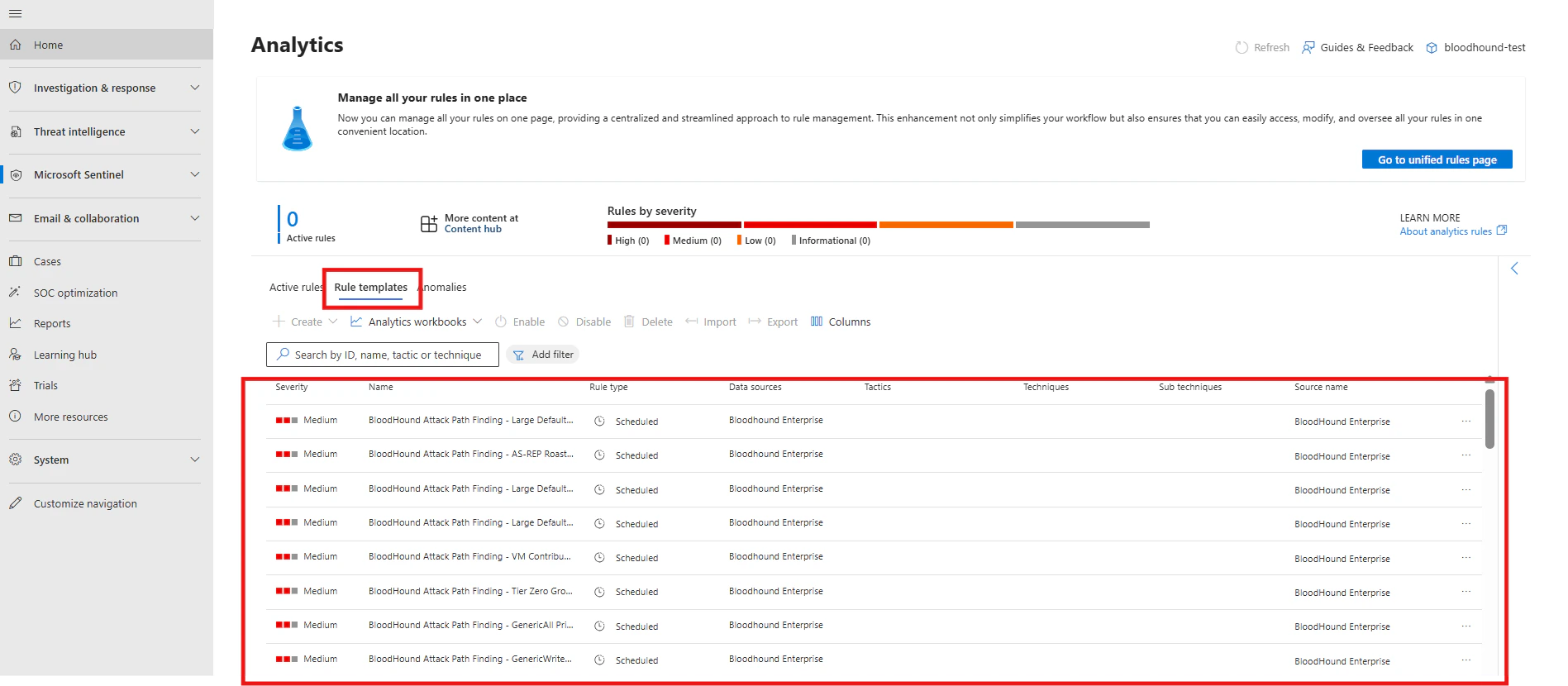

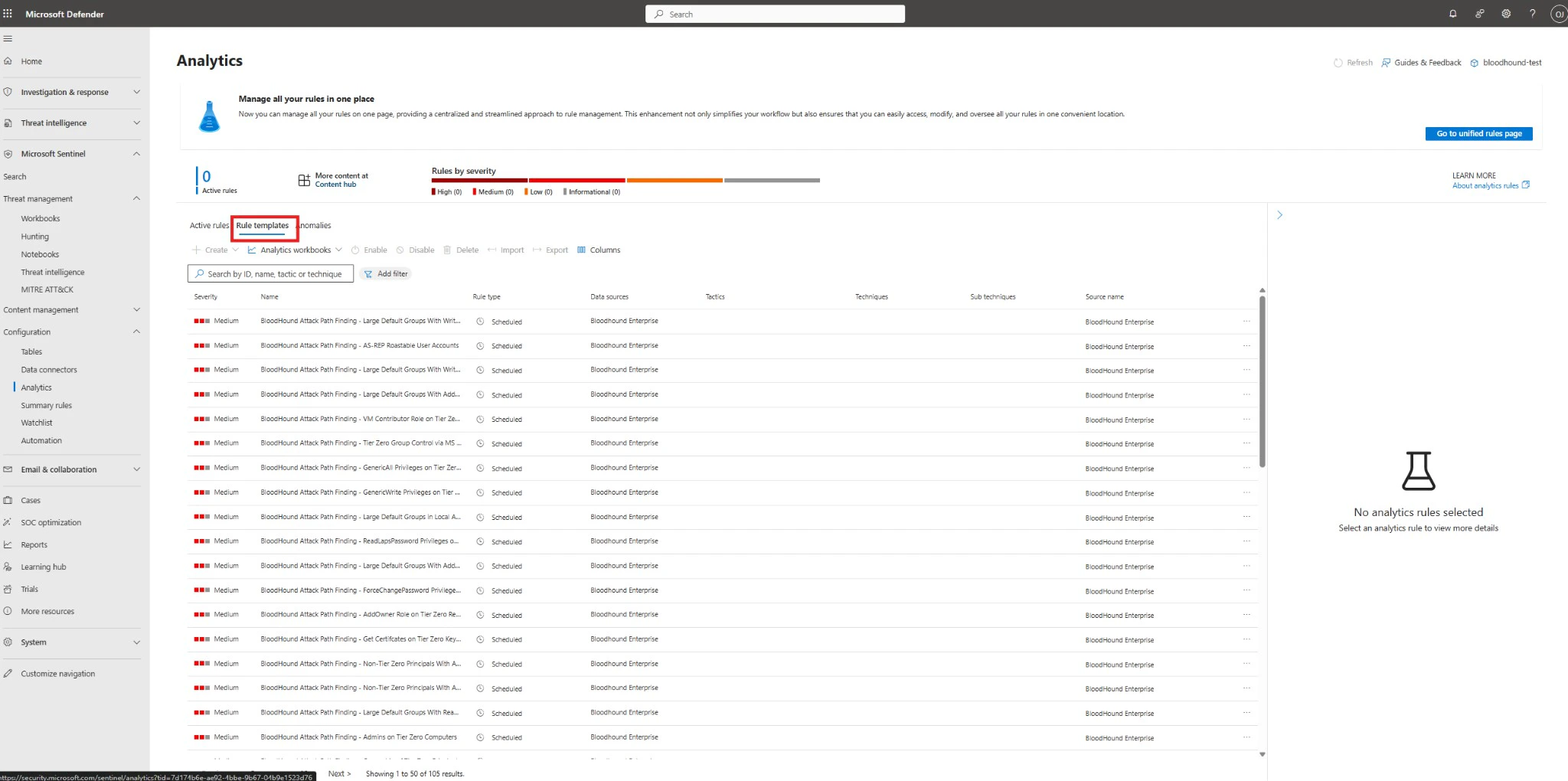

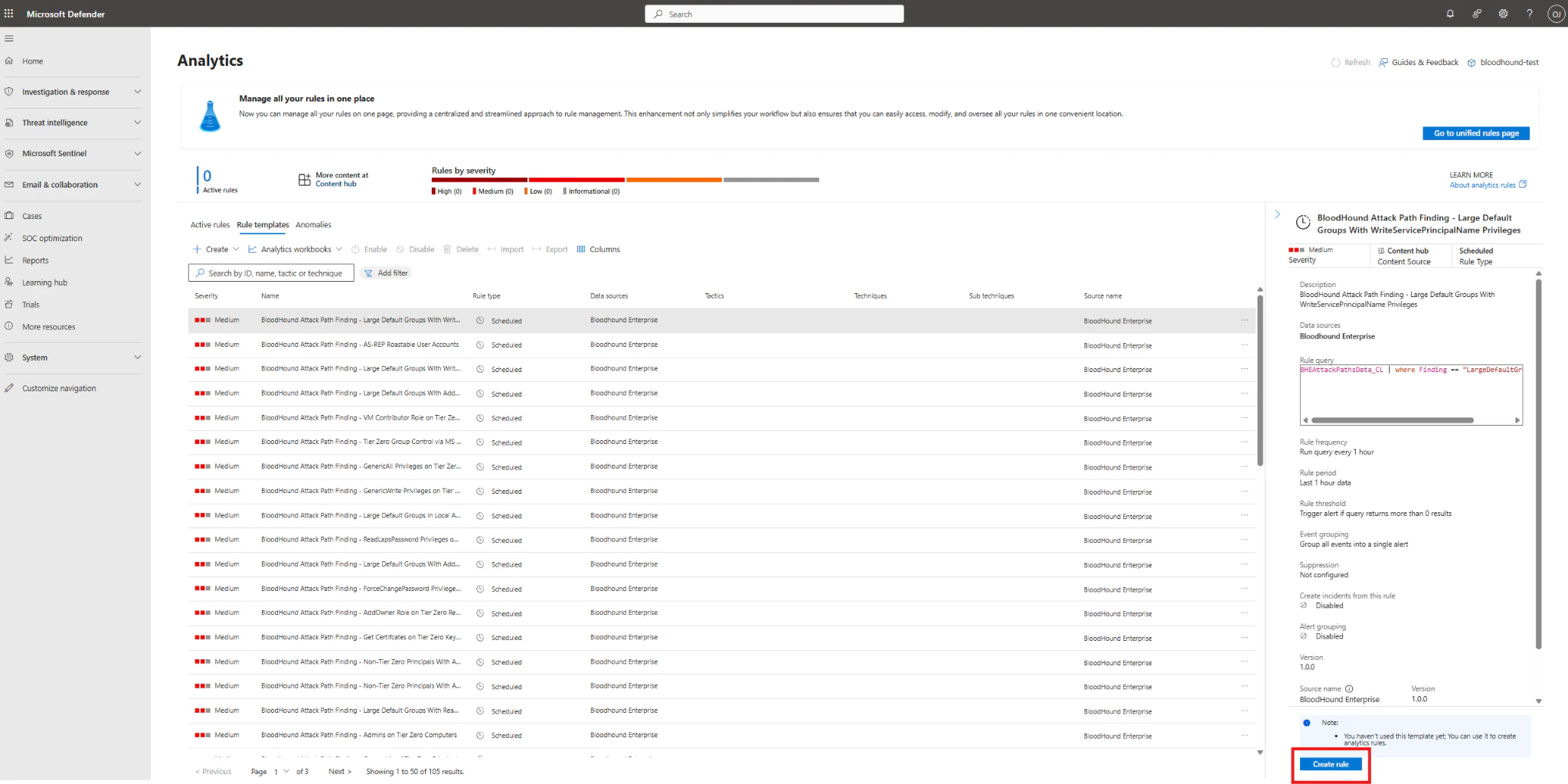

Go to Configuration > Analytics > Rule templates and verify the BloodHound analytics rules are available.

-

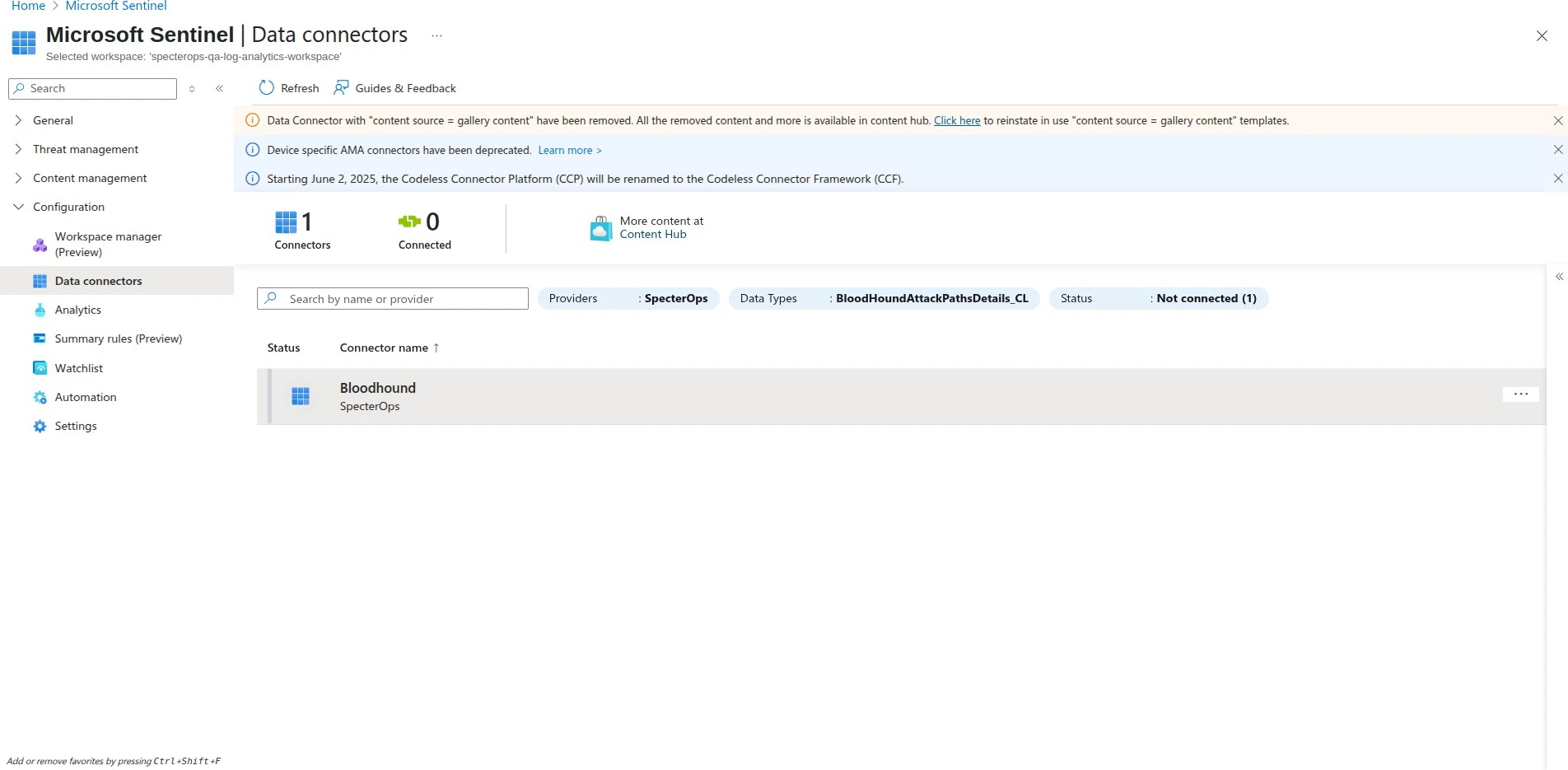

Go to Configuration > Data connectors and verify the BloodHound Data Connector is listed and connected.

Deploy the data connector template

After deploying the workbook and analytics rules template, configure the data connector with your BloodHound Enterprise API credentials and settings.

- Log in to the Azure Portal with an account that has the Owner role on the resource group.

- Click the following link to open a preloaded ARM template in the Azure Portal: Deploy to Azure.

-

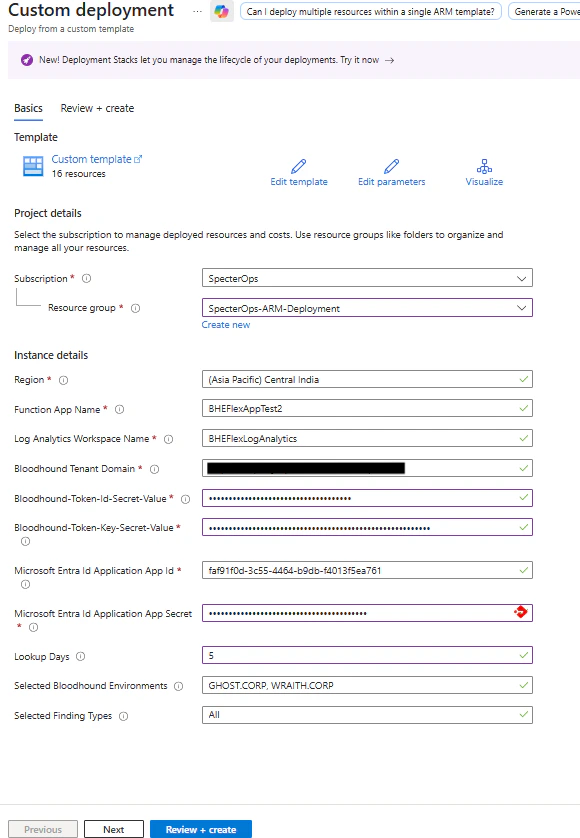

Confirm the template opens on the Custom deployment page.

Configure data connector parameters

Fill in the deployment parameters:

| Parameter Name | Description |
|---|---|
| Subscription | The Azure subscription to deploy the resources to. |
| Resource Group | The name of the resource group where the resources will be deployed. |
| Function App Name | The name of the Azure Function App. This must be unique across Azure, since each instance requires its own Function App (for example, BloodHoundEnterprise-Maple). |
| Log Analytics Workspace Name | The name of the existing Log Analytics Workspace where you want to create a Data Collection Endpoint (DCE) and Data Collection Rule (DCR) for custom tables. |
| Bloodhound Tenant Domain | The URL for the BloodHound Enterprise tenant domain. |
| Bloodhound Token ID Secret Value | The value for the BloodHound token ID. This value will be stored in an Azure Key Vault secret. |
| Bloodhound Token Key Secret Value | The value for the BloodHound token key. This value will be stored in an Azure Key Vault secret. |
| Microsoft Entra Id Application App Id | The unique identifier for the Microsoft Entra ID application. This ID, also known as the Client ID, is used to authenticate your application to the Microsoft identity platform. |
| Microsoft Entra ID Application App Secret | A confidential secret generated for your Microsoft Entra ID application. This secret, also known as the Client Secret, is used along with the App ID to prove the application’s identity when requesting an access token. |
| Lookup Days | Specifies the number of days in the past for which the system should fetch data. A higher value means more historical data will be retrieved, which increases the time and compute resources required during the first iteration when setting up the system. This parameter sets the default lookback period when no previous timestamp is available. |
| Selected Bloodhound Environments | The selected BloodHound environments from which you want to fetch data. These should be provided as comma-separated values (e.g., Ghost.Corp, Phantom.Corp). The default value is All. |
| Selected Finding Types | The selected Finding Types from which you want to fetch data. These should be provided as comma-separated values (e.g., T0MarkSensitive, T0GenericAll). The default value is All. |

- Select the target subscription and resource group, then enter deployment parameters such as workspace name and workspace location.

- Click Review + create.

- Click Create to deploy the workbook and data connector resources.
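The Lookup Days and comma-separated selection parameters are easier to reason about with a small example. The sketch below shows one plausible interpretation of their documented behavior. It is not the connector's actual code: the function names `parse_selection` and `lookback_start` are hypothetical, and the treatment of `All` as "no filter" is an assumption drawn from the parameter descriptions.

```python
from __future__ import annotations

from datetime import datetime, timedelta, timezone


def parse_selection(raw: str) -> list[str] | None:
    """Split a comma-separated parameter value into trimmed names.

    Returns None when the value is empty or contains "All", which this
    sketch interprets as "do not filter" (an assumption).
    """
    items = [part.strip() for part in raw.split(",") if part.strip()]
    if not items or any(item.lower() == "all" for item in items):
        return None
    return items


def lookback_start(
    lookup_days: int, now: datetime, last_seen: datetime | None = None
) -> datetime:
    """Pick the earliest timestamp to fetch data from.

    Lookup Days only applies when no previous timestamp is available.
    """
    if last_seen is not None:
        return last_seen
    if lookup_days < 1:
        raise ValueError("Lookup Days must be a positive number of days")
    return now - timedelta(days=lookup_days)


print(parse_selection("Ghost.Corp, Phantom.Corp"))
print(parse_selection("All"))
print(lookback_start(7, datetime(2024, 1, 8, tzinfo=timezone.utc)))
```

Under this reading, a larger Lookup Days value only affects the first run, because later runs resume from the last recorded timestamp.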

Deploy the data connector code

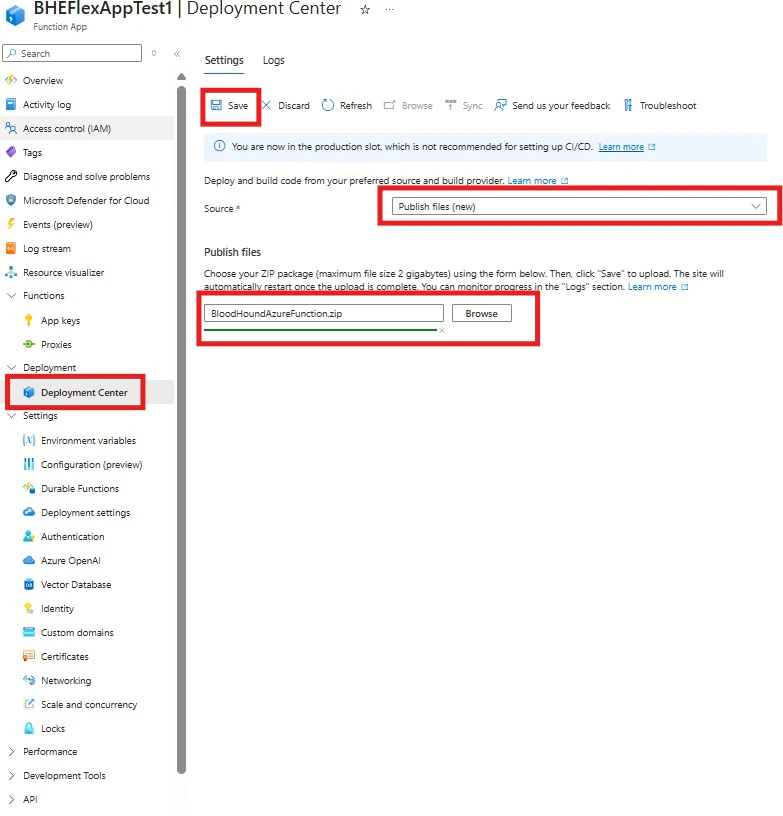

The ARM template will deploy the necessary Azure resources for the data connector, but you will also need to deploy the Azure Function code that fetches data from BloodHound Enterprise and ingests it into Microsoft Sentinel.

- Download the BloodHoundAzureFunction.zip archive from the GitHub repository.

- Open your Function App in Azure Portal.

- In the left menu, select Deployment Center under the Deployment section.

  You will see multiple options for deployment, including:

  - GitHub: Connect your GitHub repository for continuous deployment.

  - Azure Repos: Connect your Azure DevOps repository for continuous deployment.

  - Publish files: Manually upload your function code for one-time deployment.

- Select Publish files, select the downloaded BloodHoundAzureFunction.zip archive, and click Save.

After deployment, you should see the function code in the Functions section of your Function App.

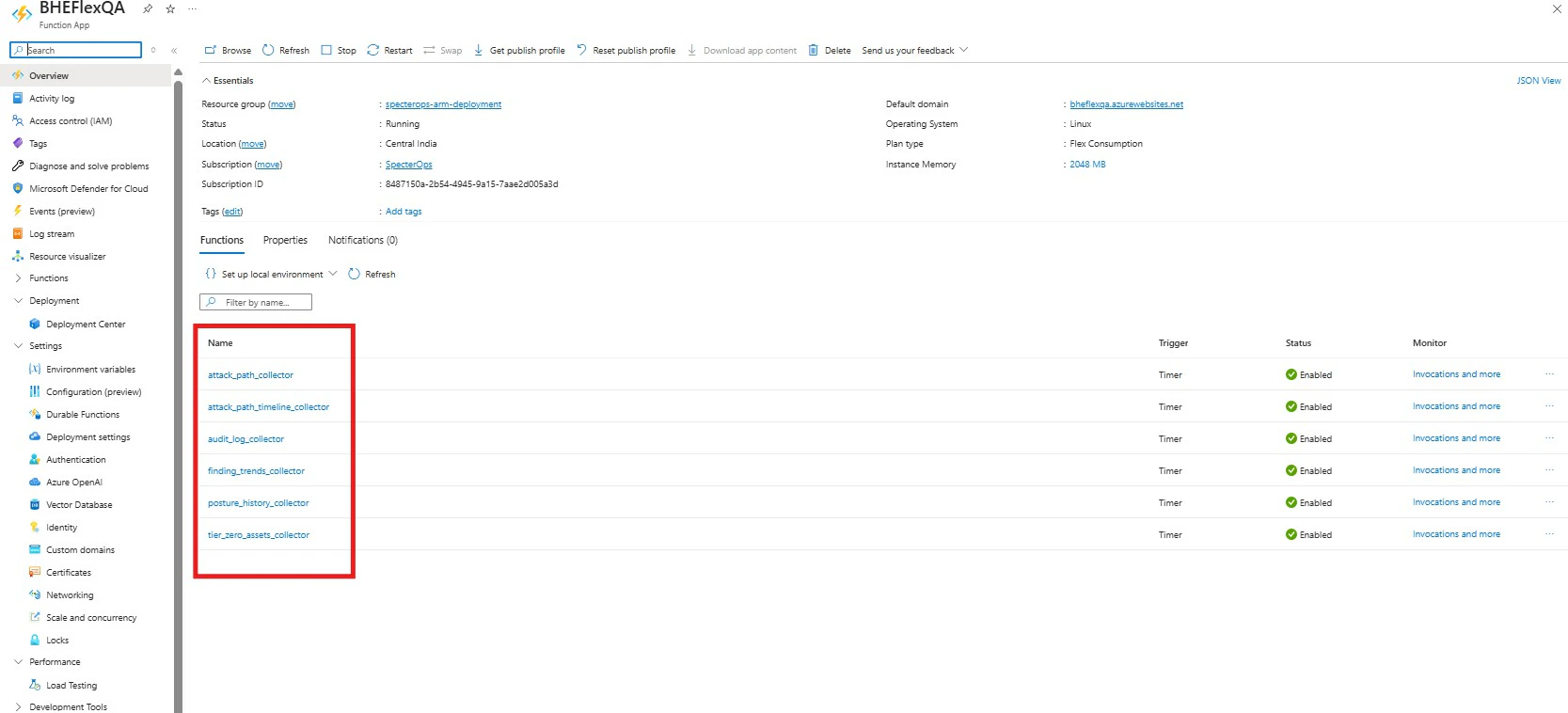

Verify the deployment

After deploying the Azure Function code, manually run each function to verify that the functions are running correctly and able to fetch data from BloodHound Enterprise and push it into the custom tables in your Log Analytics Workspace.

-

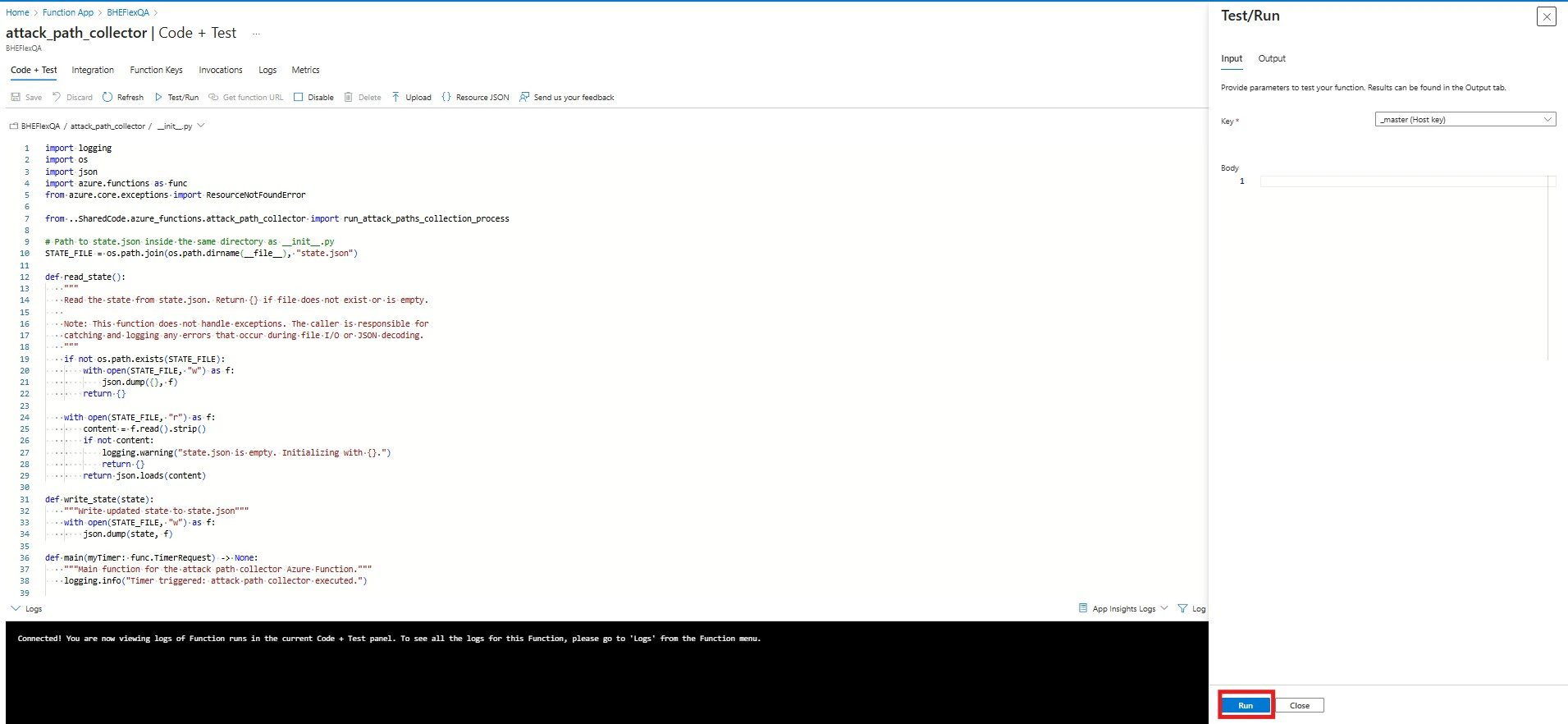

Navigate to the Overview page of your Function App and select one of the deployed functions.

- Click the Code + Test tab.

- Click Test/Run.

- Click Run to execute the function.

- Monitor the execution logs to confirm that the function is running successfully and fetching data from BloodHound Enterprise.

  You should see log entries indicating successful execution and data retrieval for each finding type.

- Repeat this one-time manual process for each deployed function to ensure all functions are working correctly.

Validate the integration

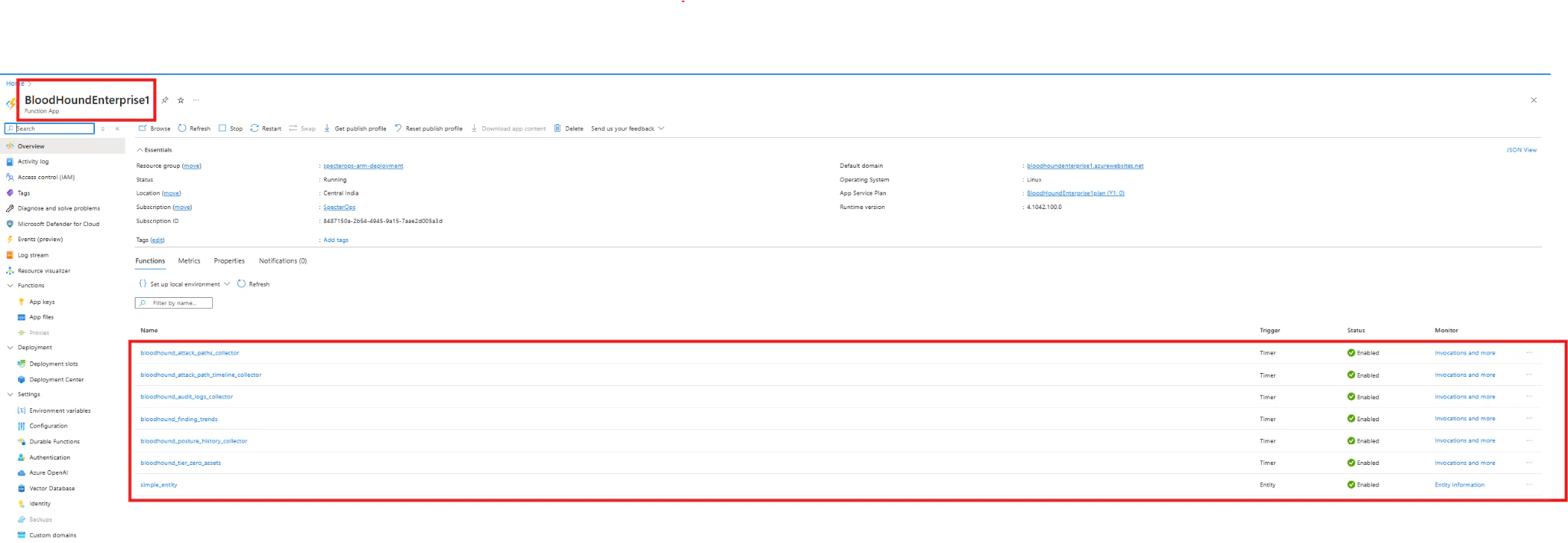

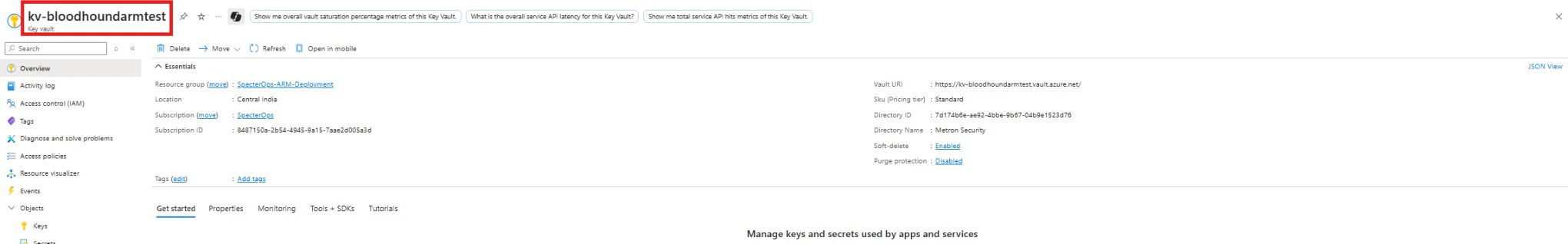

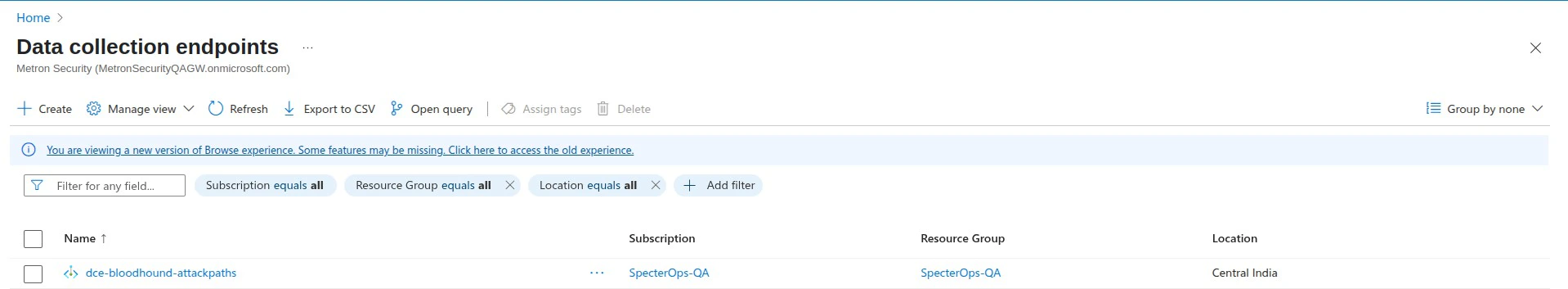

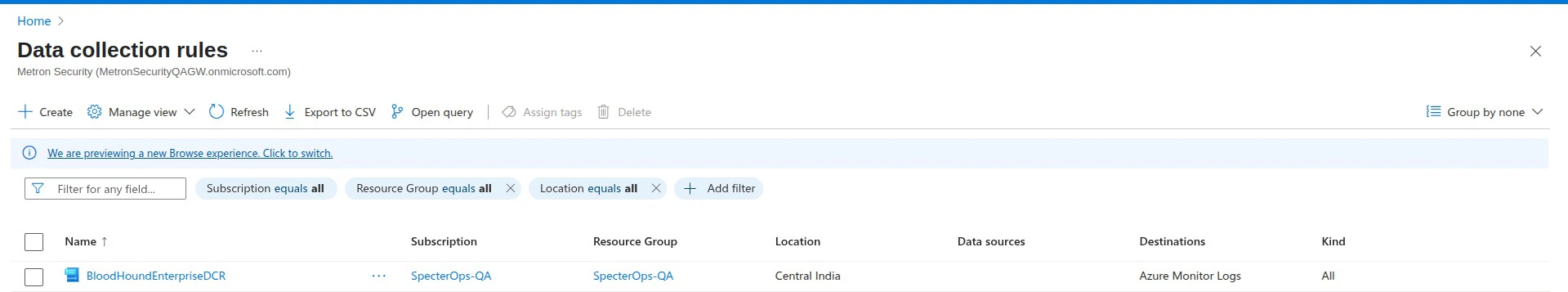

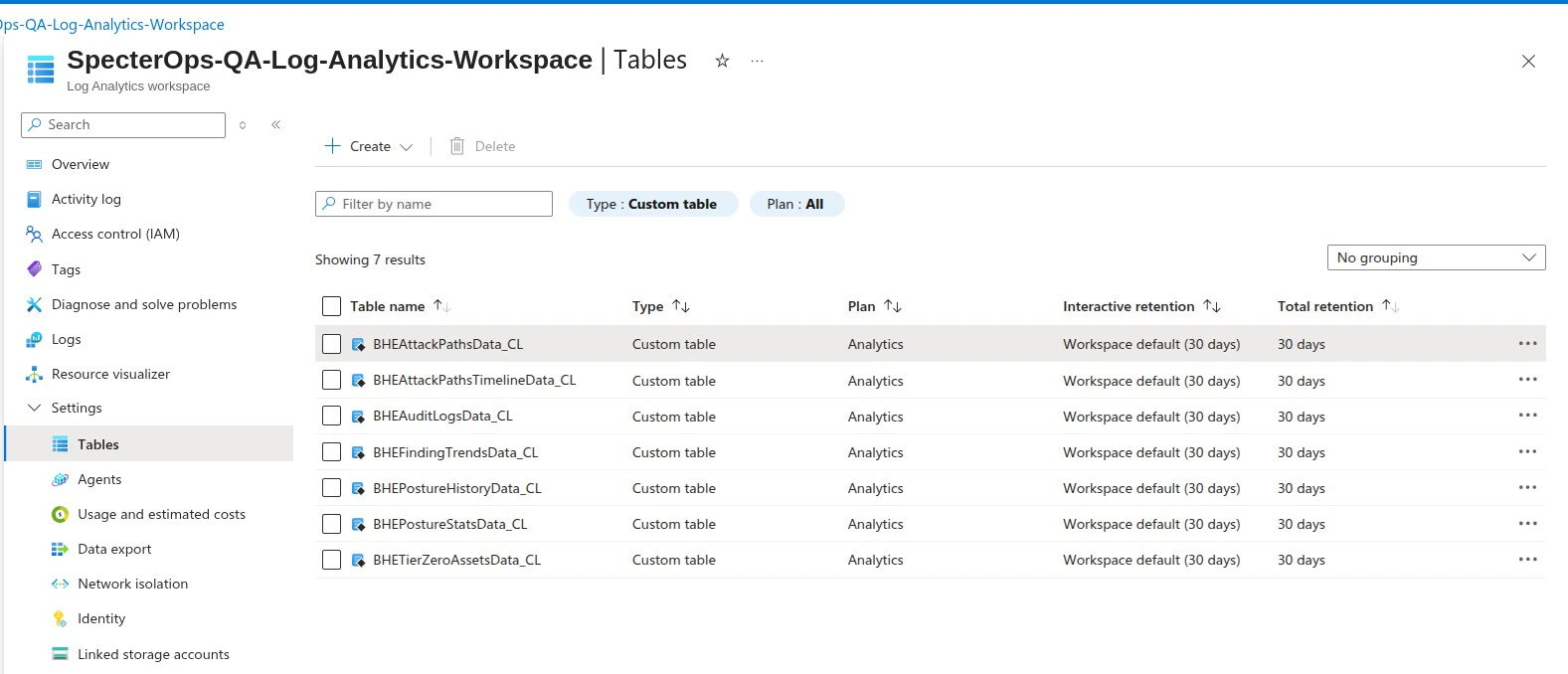

Complete verification before operational use.

Verify connector resources

- In Azure Portal, open Function App and confirm your deployed app exists and all BloodHound functions are listed.

- Open Key Vault and confirm the connector vault exists and includes the expected secrets.

- Open Data Collection Endpoints and confirm the BloodHound endpoint exists.

- Open Data Collection Rules and confirm the BloodHound rules exist.

- Open your Log Analytics workspace and confirm these custom tables exist:

  - BHEAttackPathsData_CL

  - BHEAttackPathsTimelineData_CL

  - BHEAuditLogsData_CL

  - BHEFindingTrendsData_CL

  - BHEPostureHistoryData_CL

  - BHETierZeroAssetsData_CL

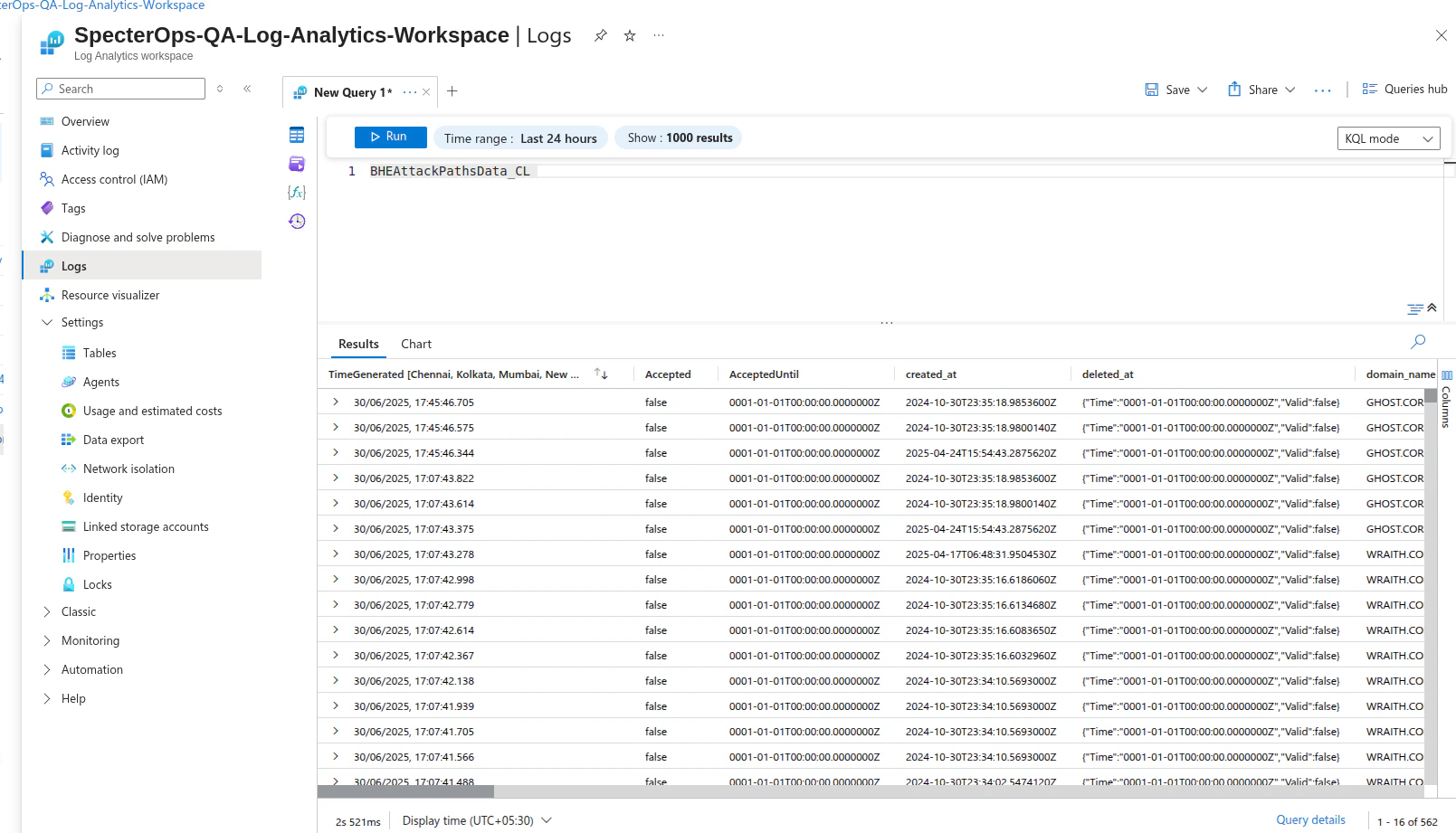

Verify data connector ingestion

Complete these steps to start your Azure Function App and begin ingesting BloodHound Enterprise data into custom tables.

- Open your Function App and start it from Overview if it is stopped.

- Open your Log Analytics workspace and click Logs.

- Verify that you can see custom logs.
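One way to check for custom logs is to run a single row-count query across all six BloodHound custom tables in the Logs blade. The sketch below generates such a KQL query as text. The helper name `build_row_count_query` and the one-day lookback window are illustrative assumptions, and `isfuzzy=true` is used so that the query still runs if a table has not been created yet.

```python
# Custom tables the integration is expected to create in the workspace.
EXPECTED_TABLES = [
    "BHEAttackPathsData_CL",
    "BHEAttackPathsTimelineData_CL",
    "BHEAuditLogsData_CL",
    "BHEFindingTrendsData_CL",
    "BHEPostureHistoryData_CL",
    "BHETierZeroAssetsData_CL",
]


def build_row_count_query(tables: list[str], lookback: str = "1d") -> str:
    """Return a KQL query that counts recent rows per table.

    Hypothetical helper: it only builds the query text, which you then
    paste into the Logs query editor of the Log Analytics workspace.
    """
    joined = ", ".join(tables)
    return (
        f"union withsource=TableName isfuzzy=true {joined}\n"
        f"| where TimeGenerated > ago({lookback})\n"
        "| summarize Rows = count() by TableName"
    )


print(build_row_count_query(EXPECTED_TABLES))
```

A table that is missing from the results has either not been created or has received no rows in the lookback window. In that case, review the function execution logs before moving on to the workbooks.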

Activate dashboards and analytics rules

-

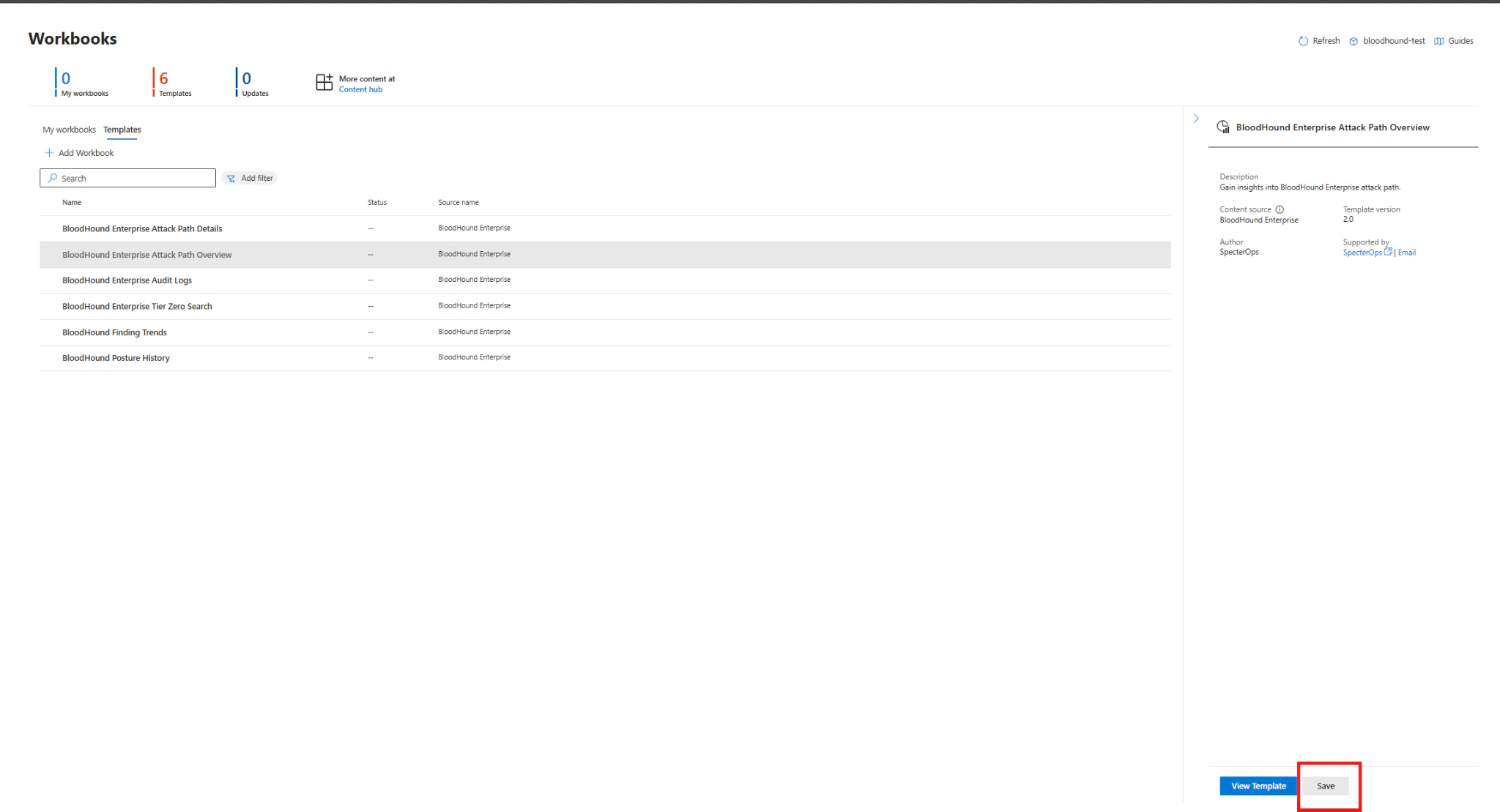

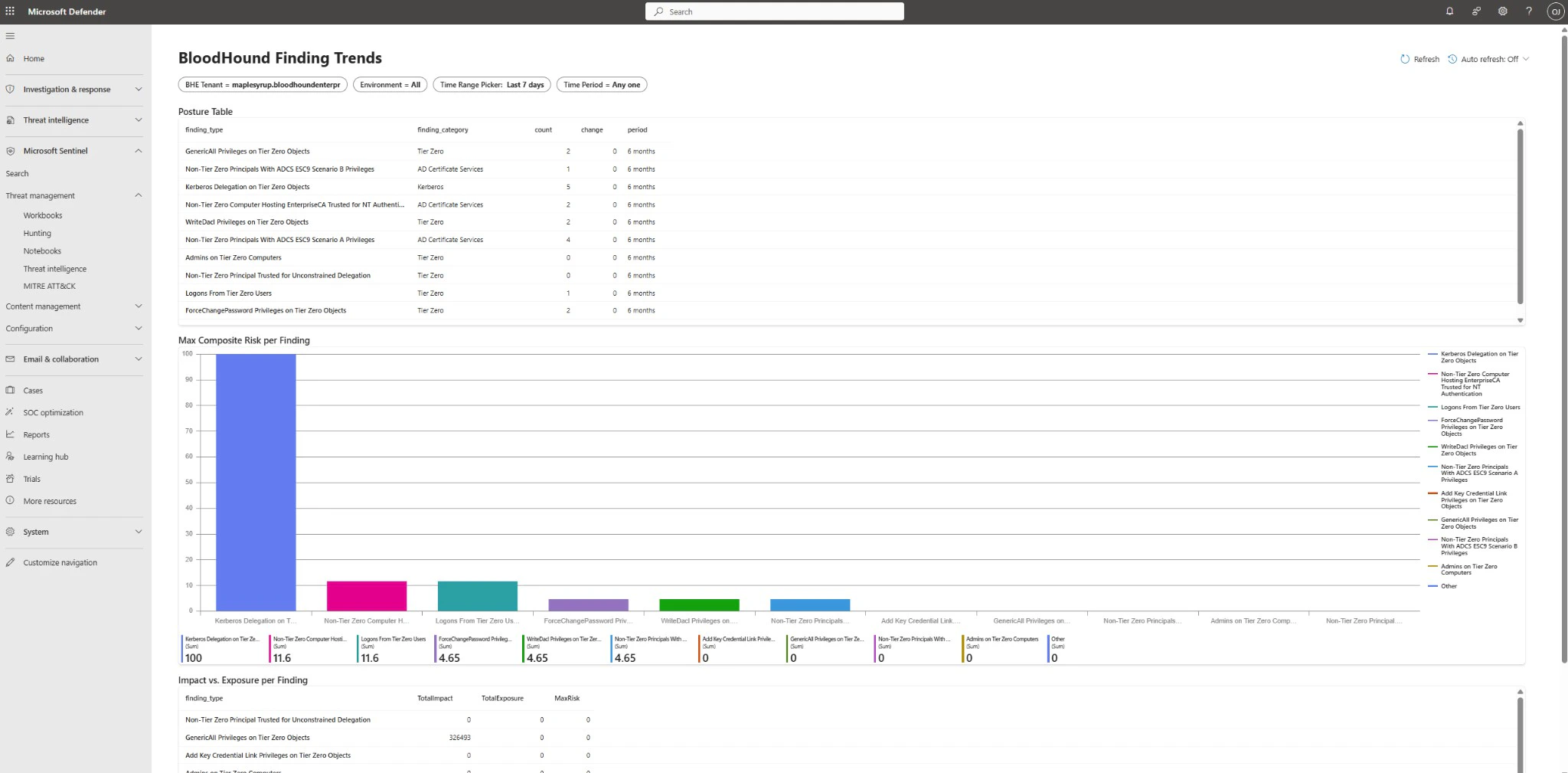

In Microsoft Sentinel, go to Workbooks. You must save each workbook before editing or operational use.

-

To save each workbook, double-click the workbook, then click Save in the modal that displays.

-

Open each workbook to confirm it loads data correctly. If you see errors, review the function execution logs and ensure the data connector is ingesting data into the custom tables.

Verify analytics rules

-

In the Sentinel workspace, navigate to Configuration > Analytics > Rule templates.

-

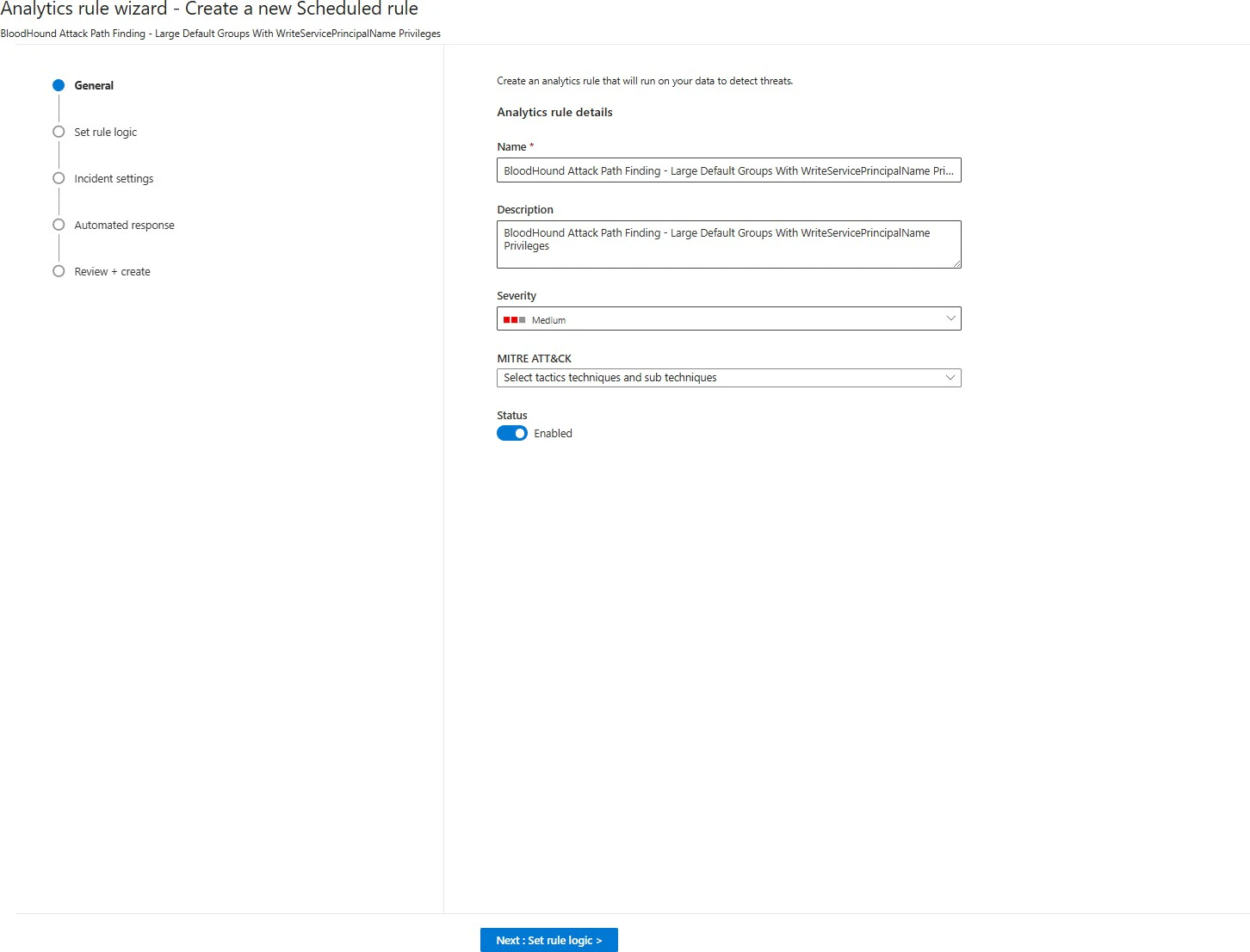

To generate incidents, create and save each Analytics rule. Select any rule to open the right-side panel, then click Create rule.

-

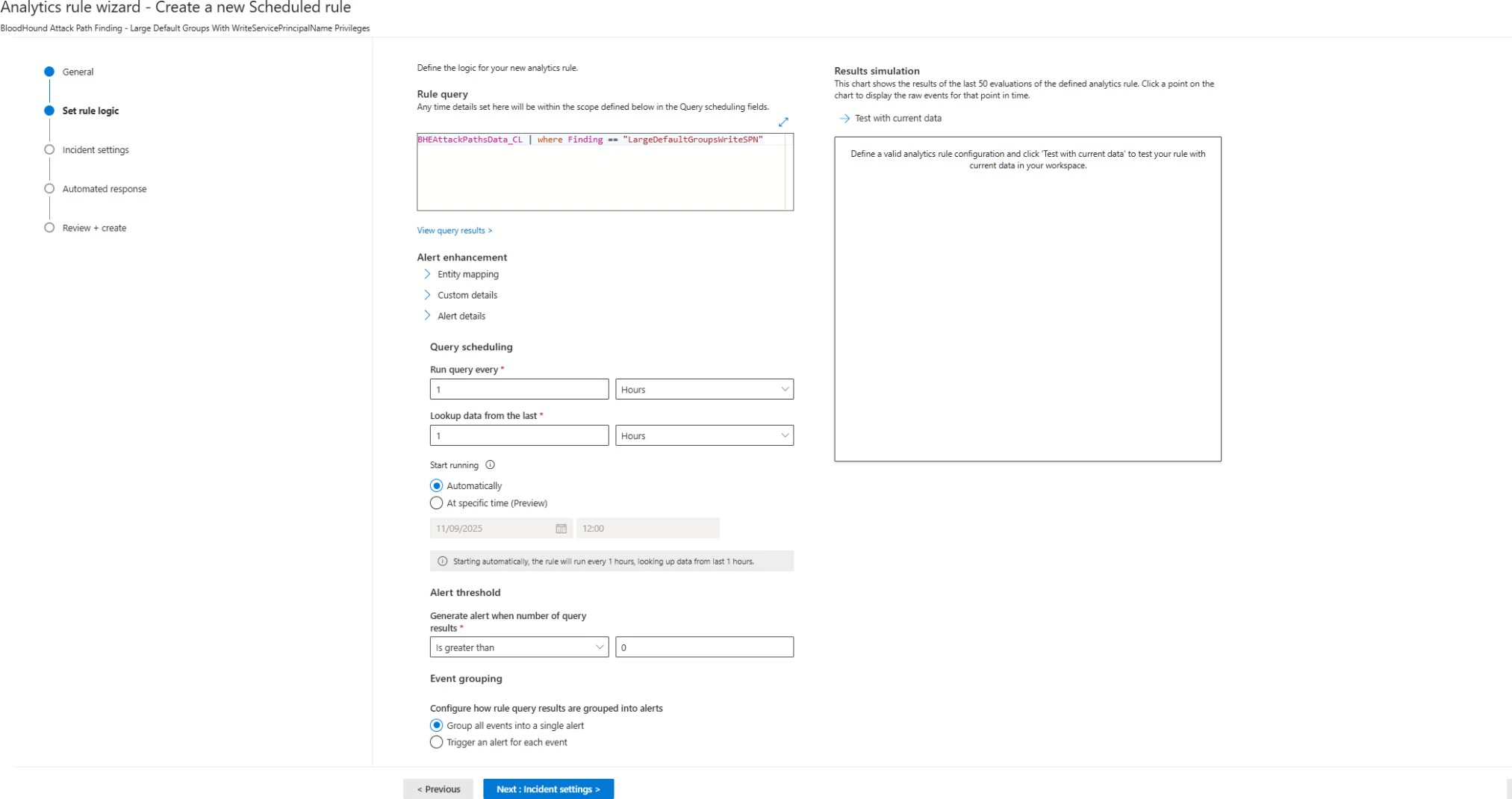

Click Next: Set rule logic and keep the default values.

-

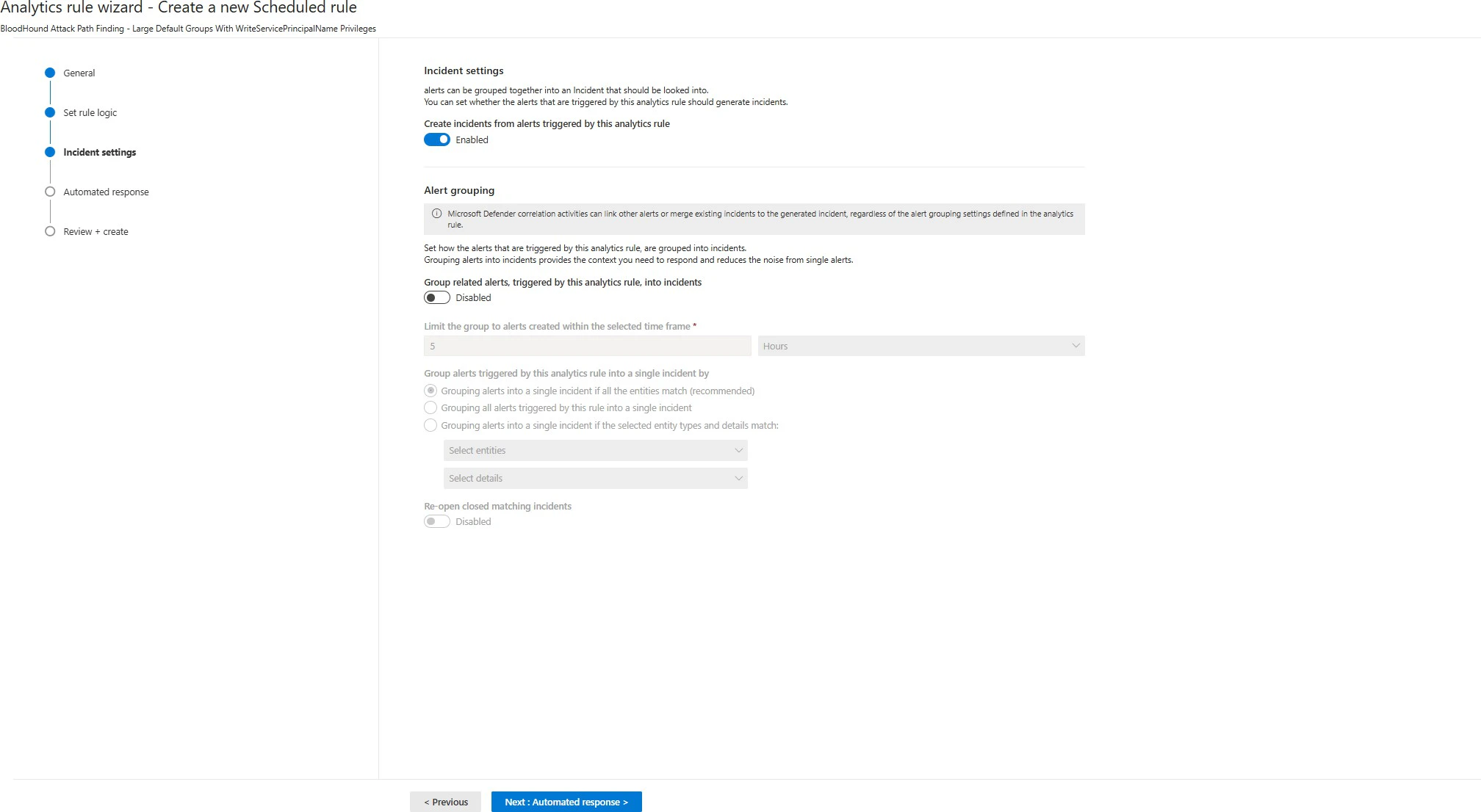

Click Next: Incident settings and keep the default values.

-

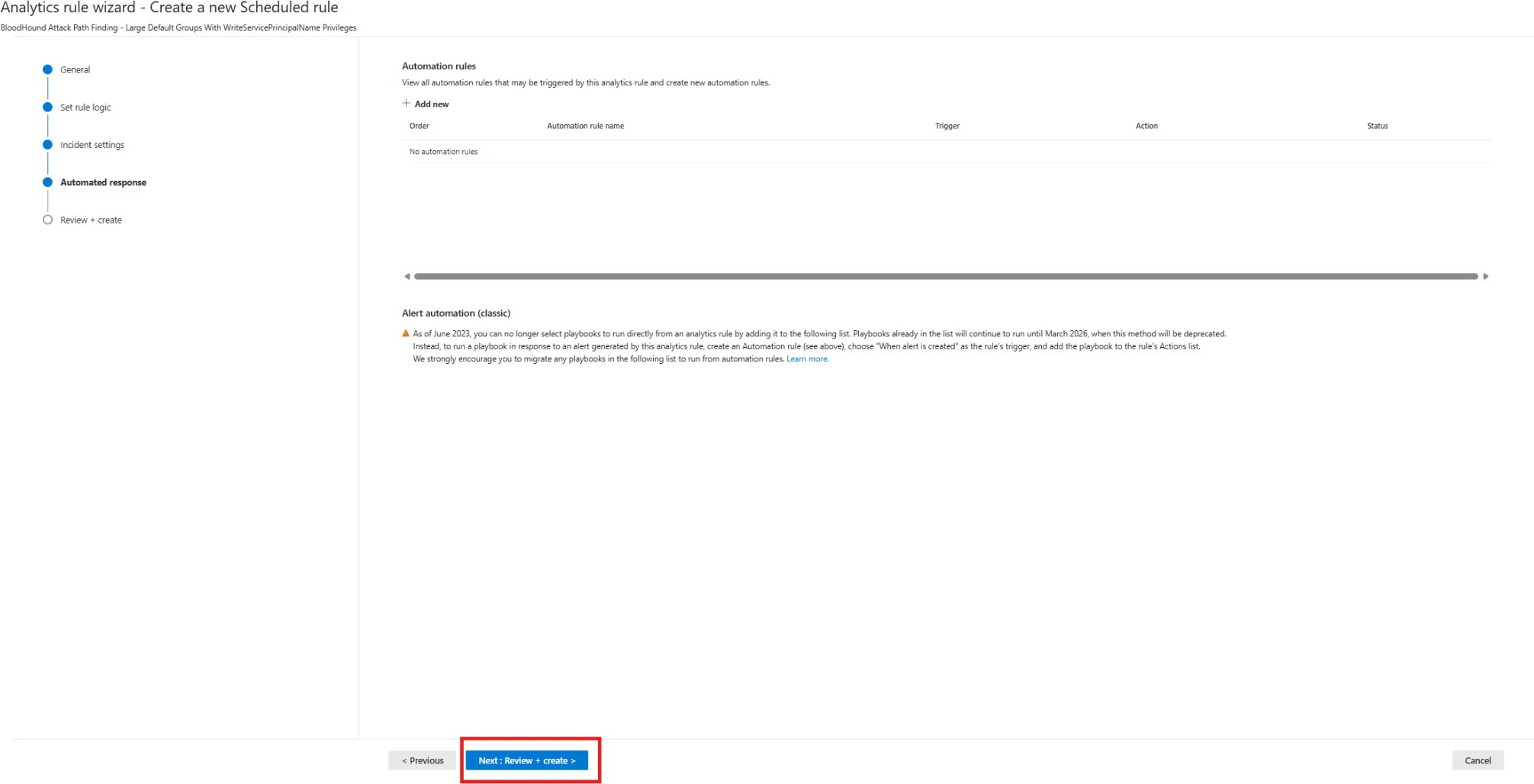

Click Next: Automated response and keep the default values.

-

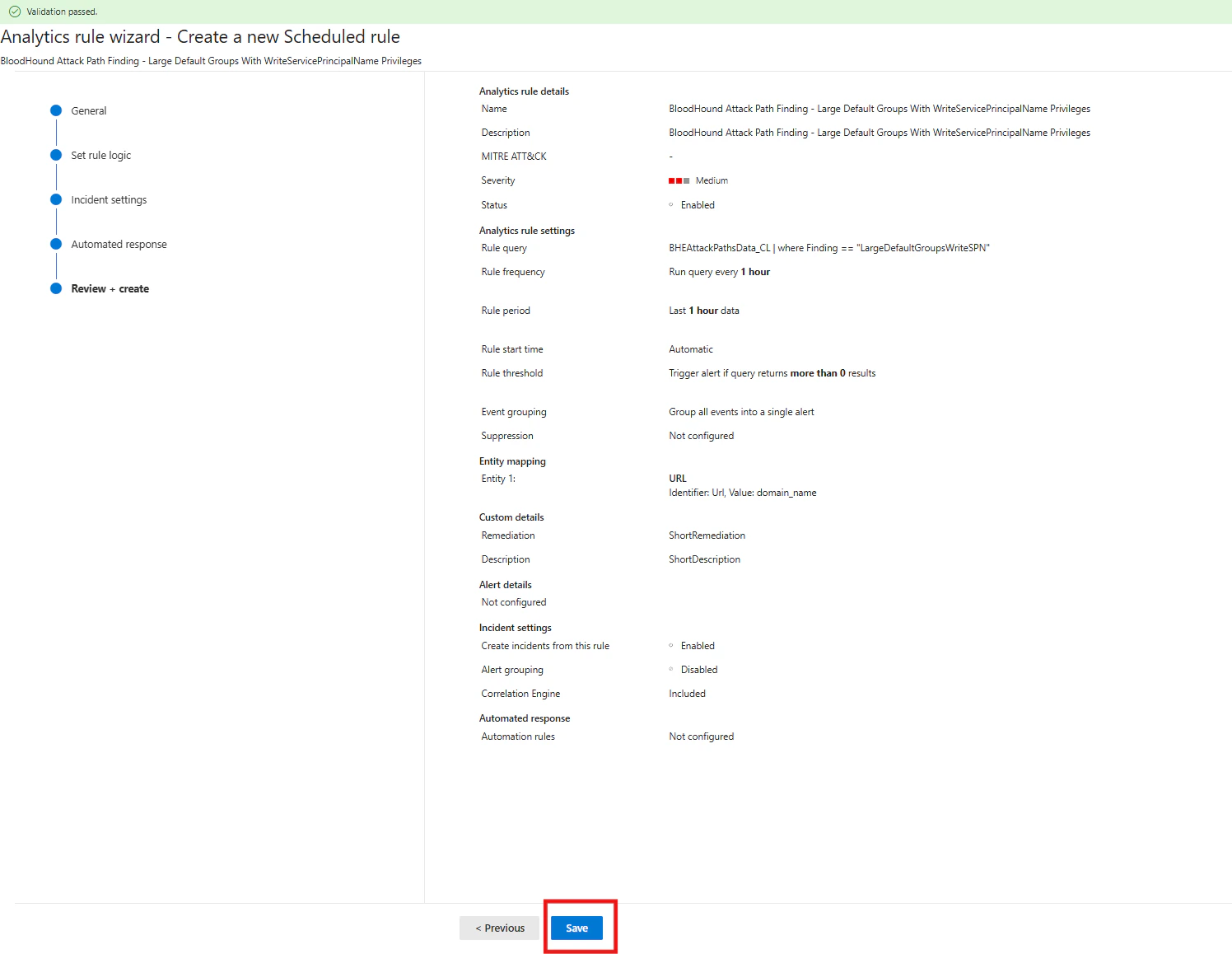

Click Next: Review + Create and keep the default values.

-

Click Save.

- Repeat this process for each Analytics rule. Incidents are generated only after the rules are created and saved.

-

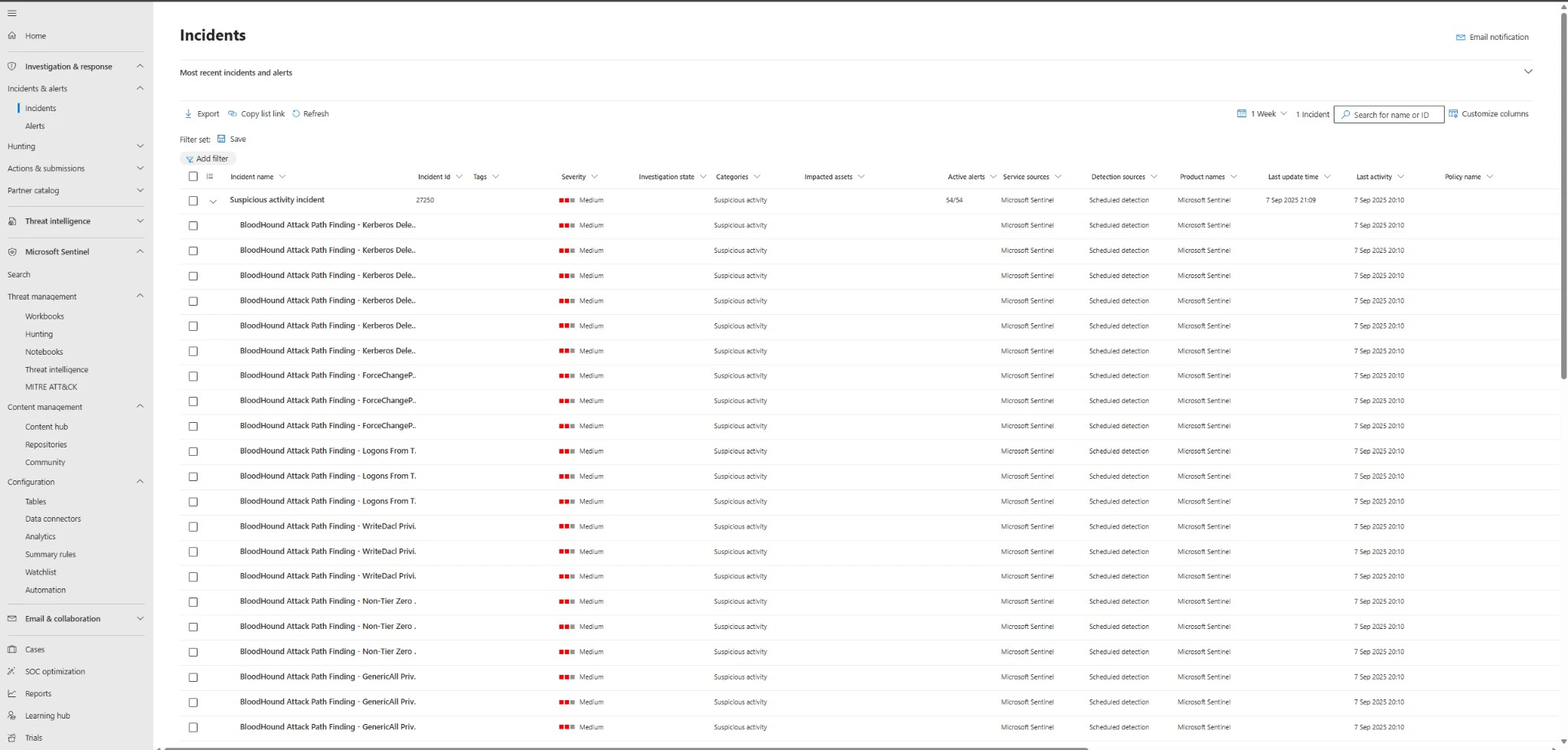

To check incidents after rules are created, navigate to Investigation & Responses > Incidents & Alerts > Incidents.